This paper proposes a list of tentative hypotheses about Heuretics, the study of heuristics, in order to stimulate further research.

Hypothesis 1: Heuretics is a Bona Fide Field of Knowledge, and Merits Investigation by AI.

The standard empirical-inquiry paradigm for modern AI research is to test hypotheses about heuristics by constructing programs that use them, and then to try to find new ones.

Hypothesis 2: Heuristic Rules have Three Primary Uses

- To prune away implausible options
- To propose plausible ones
- To serve as data for the induction of new heuristic rules

Earlier workers in the field, such as Michie, Nilsson, and Gaschnig, most heavily studied the first use, pruning. A genetic program, for instance, is an example of the second use, proposing plausible options.
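To make the first two uses concrete, here is a minimal Python sketch of a generate-and-test loop in which one heuristic proposes plausible options and another prunes implausible ones. The integer "options" and the functions `propose`, `implausible`, and `search_step` are hypothetical illustrations chosen for brevity, not anything taken from the paper.

```python
from typing import Iterable, List

Option = int  # hypothetical: options are plain integers in this toy


def propose(seed: Option) -> Iterable[Option]:
    """Proposing heuristic (use 2): suggest options 'near' an existing one."""
    return [seed - 1, seed + 1, seed * 2]


def implausible(option: Option) -> bool:
    """Pruning heuristic (use 1): reject options outside an assumed range."""
    return option < 0 or option > 100


def search_step(frontier: List[Option]) -> List[Option]:
    """Expand every option with the proposer, then filter with the pruner."""
    candidates = [c for seed in frontier for c in propose(seed)]
    return sorted({c for c in candidates if not implausible(c)})


print(search_step([0, 60]))
```

Here `search_step([0, 60])` proposes -1, 1, 0, 59, 61, and 120, and the pruning heuristic discards -1 and 120. Recording which proposals survived would supply the data needed for the third use, inducing new rules.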

Hypothesis 3: Heuristics can act as plausible 'move' generators, to guide an explorer towards valuable new concepts worthy of attention.

This can be seen as a generalisation problem, as in peephole optimisation. For example, given a binary function f(x, y), the function g(x) = f(x, x) is a worthy idea to investigate: applied to multiplication, it yields squaring.
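The move described above can be sketched as a tiny concept generator. This is a hedged Python illustration only: the name `coalesce` and the dictionary of "known" operations are our own assumptions, not the representation used by the programs the paper discusses.

```python
from typing import Callable, Dict

BinaryFn = Callable[[int, int], int]
UnaryFn = Callable[[int], int]


def coalesce(f: BinaryFn) -> UnaryFn:
    """Move-generator heuristic: from f(x, y), propose g(x) = f(x, x)."""
    def g(x: int) -> int:
        return f(x, x)
    return g


def propose_new_concepts(known: Dict[str, BinaryFn]) -> Dict[str, UnaryFn]:
    """Apply the heuristic to every known binary operation (hypothetical API)."""
    return {f"coalesced-{name}": coalesce(f) for name, f in known.items()}


concepts = propose_new_concepts({
    "multiply": lambda x, y: x * y,  # coalesces to squaring
    "add": lambda x, y: x + y,       # coalesces to doubling
})
print(concepts["coalesced-multiply"](7), concepts["coalesced-add"](7))
```

The heuristic does not know in advance that squaring is interesting; it merely proposes the candidate, and other heuristics must judge whether the new concept deserves attention.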

Hypothesis 4: The same methodology enables a body of heuristics to monitor, modify, and enlarge itself.

For example, a heuristic stating that a concept is sometimes useful and sometimes not can apply to itself, because sometimes it is not useful to think that way.
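A minimal Python sketch of self-application, assuming heuristics are stored as ordinary concepts with a success/failure record. The meta-heuristic `specialise_if_mixed` and the `-variant-N` naming scheme are hypothetical; the point is only that a heuristic represented as data can take itself as input.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Concept:
    """Anything the system reasons about, including heuristics themselves."""
    name: str
    successes: int = 0
    failures: int = 0

    def is_mixed(self) -> bool:
        # "Sometimes useful and sometimes not": at least one of each outcome.
        return self.successes > 0 and self.failures > 0


def specialise_if_mixed(concept: Concept) -> List[Concept]:
    """Hypothetical meta-heuristic: split a concept with a mixed record into
    two narrower variants that can be evaluated separately."""
    if not concept.is_mixed():
        return []
    return [Concept(f"{concept.name}-variant-{i}") for i in (1, 2)]


# The meta-heuristic is itself a concept with a track record,
# so it can be applied to its own representation.
meta = Concept("specialise-if-mixed", successes=3, failures=2)
new_variants = specialise_if_mixed(meta)
print([c.name for c in new_variants])
```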

Hypothesis 5: Heuristics are compiled hindsight and draw their power from regularity and continuity in the world.

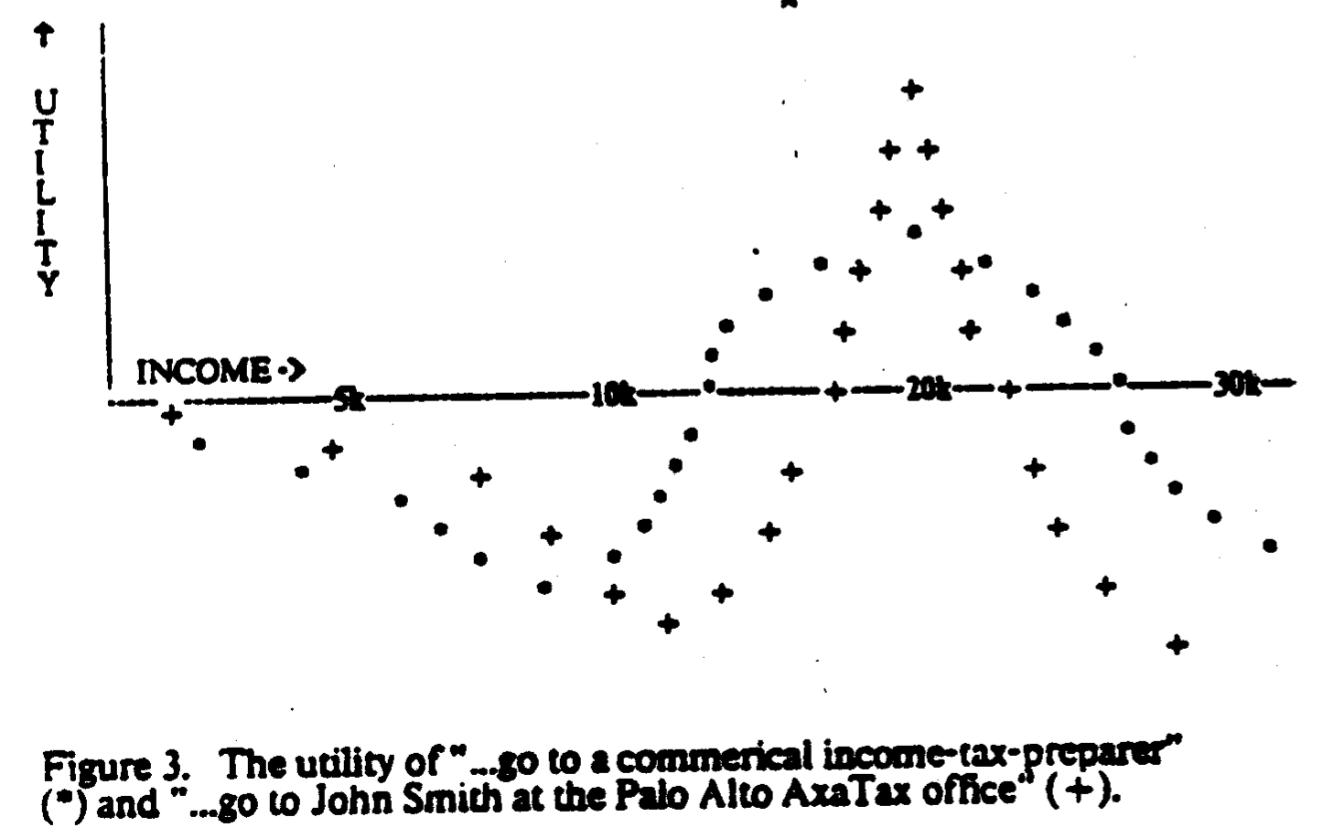

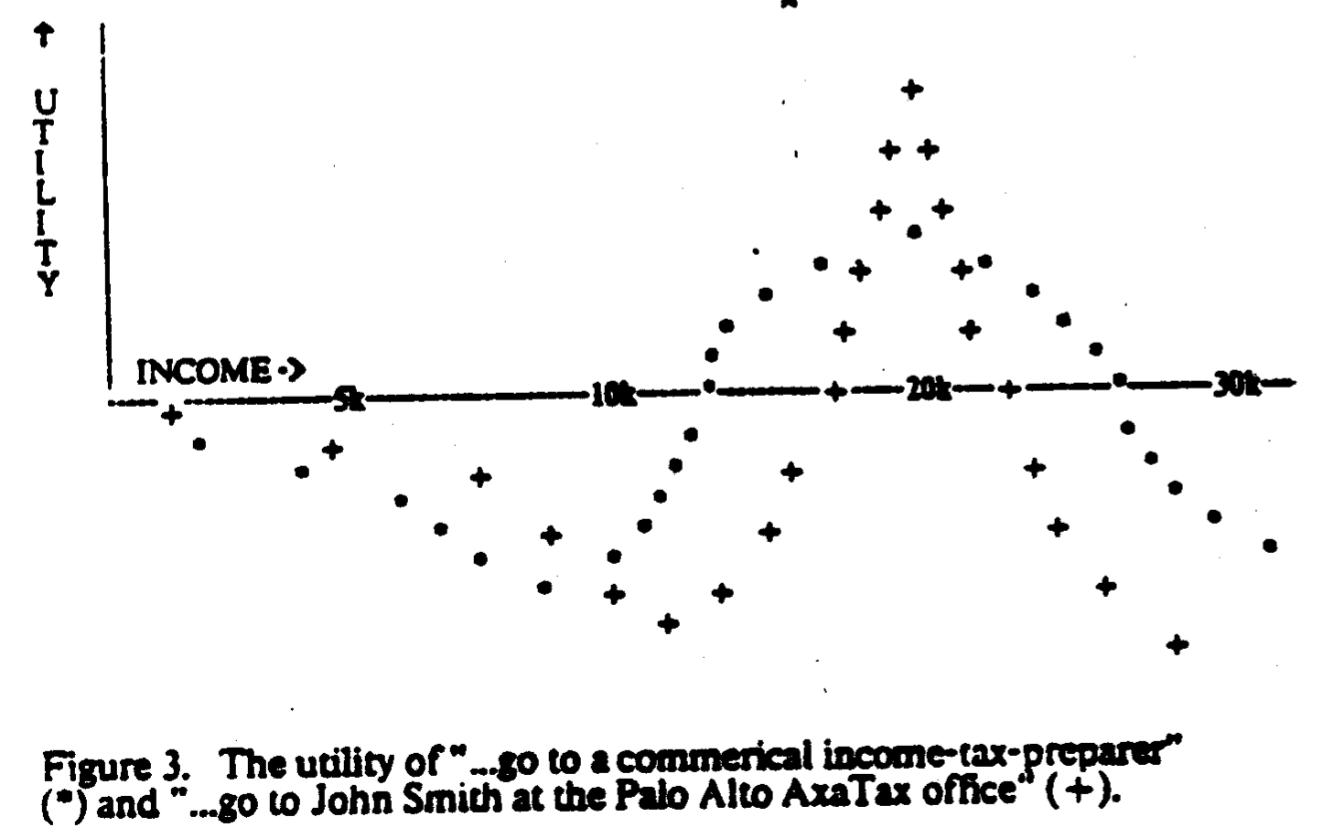

The value of a heuristic can therefore be estimated from its utility in the situations where it has already been applied.
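One way to read "compiled hindsight" operationally is to keep a record of a heuristic's past payoffs and use their mean as the estimate of its value. The Python sketch below assumes payoffs are numbers in [0, 1] and shrinks the estimate toward a prior when few uses have been seen; both the prior and the class name `HeuristicRecord` are illustrative assumptions, not the paper's method.

```python
from dataclasses import dataclass


@dataclass
class HeuristicRecord:
    """Hindsight record for one heuristic: how often it fired, what it paid."""
    name: str
    uses: int = 0
    total_payoff: float = 0.0

    def record(self, payoff: float) -> None:
        """Log the payoff observed after one application of the heuristic."""
        self.uses += 1
        self.total_payoff += payoff

    def estimated_value(self, prior: float = 0.5, prior_weight: int = 1) -> float:
        """Mean past payoff, shrunk toward an assumed prior when evidence is
        scarce. Trusting it presumes the future resembles the past."""
        return (self.total_payoff + prior * prior_weight) / (self.uses + prior_weight)


h = HeuristicRecord("coalesce")
for payoff in [1.0, 0.0, 1.0]:
    h.record(payoff)
print(h.estimated_value())
```

The estimate is only as good as the regularity of the world: if the situations in which the heuristic fires change abruptly, its record stops predicting its usefulness.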

Hypothesis 6: Generalising and specialising a heuristic often leads to a pathologically extreme new one, but some of those are useful nevertheless.

A common example of this is when a more general heuristic is replaced by a more specific one but there is no associated increase in utility.

Algorithms can be understood as pathologically narrow heuristics that are so powerful that they are guaranteed to be useful.

Hypothesis 7: The graph of "all the world's heuristics" is surprisingly shallow.

This means that generalising a heuristic can be a useful investigative technique. It elevates weak methods to a special status, and means that generalisation and analogy can be more powerful tools than specialisation, as seen in the author's experience with EURISKO.
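A shallow hierarchy means that only a few generalisation steps separate a specific heuristic from a very general weak method. The Python sketch below models the hierarchy as a child-to-parent map and measures that distance. The heuristic names and links are hypothetical and chosen only for illustration; they are not a reconstruction of any real program's hierarchy.

```python
from typing import Dict, List, Optional

# Hypothetical fragment of a generalisation hierarchy: child -> parent.
# None marks a root, i.e. a weak method with no more general parent.
PARENT: Dict[str, Optional[str]] = {
    "coalesce-multiplication": "coalesce-binary-function",
    "coalesce-binary-function": "specialise-a-concept",
    "specialise-a-concept": None,
    "look-at-extreme-cases": None,
}


def generalisations(heuristic: str) -> List[str]:
    """Climb from a heuristic to its root, most specific first."""
    chain = [heuristic]
    parent = PARENT.get(heuristic)
    while parent is not None:
        chain.append(parent)
        parent = PARENT.get(parent)
    return chain


def depth(heuristic: str) -> int:
    """Number of generalisation steps needed to reach a weak method."""
    return len(generalisations(heuristic)) - 1


print(generalisations("coalesce-multiplication"))
```

If the real graph is shallow, `depth` stays small for almost every heuristic, so generalising is a cheap way to reach the powerful weak methods near the top.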

Hypothesis 8: Even though the world is often discontinuous, we usually cannot do any better than to rely on heuristics which presume continuity.

There are many measures of appropriateness, and the situation space is non-linear along all of its variable dimensions. Yet presuming continuity is close to what human experts actually do: it is frequently useful to behave as if the zeroth-order theory were true, as a kind of greedy search.
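Greedy hill climbing is a familiar instance of acting as though the world were continuous: it assumes that the neighbours of a good point are the best places to look next. This Python sketch, with a hypothetical one-dimensional integer landscape, shows both why the assumption is useful and where a discontinuity defeats it.

```python
from typing import Callable


def hill_climb(score: Callable[[int], float], start: int, max_steps: int = 100) -> int:
    """Greedy search presuming continuity: try the neighbours of the current
    point and move to the better one until no neighbour improves."""
    current = start
    for _ in range(max_steps):
        best = max([current - 1, current + 1], key=score)
        if score(best) <= score(current):
            return current  # local optimum under the continuity assumption
        current = best
    return current


def landscape(x: int) -> float:
    """Hypothetical landscape: smooth with a peak at 10, plus an isolated
    spike at 40 that continuity-based search cannot anticipate."""
    return 100.0 if x == 40 else -abs(x - 10)


print(hill_climb(landscape, 0), hill_climb(landscape, 39))
```

Starting from 0, the search reliably finds the smooth peak at 10 but never discovers the spike at 40; it reaches the spike only when it happens to start right beside it.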

Hypothesis 9: The interrelations among a set of heuristics, and the internal structure of a single one, *should* be complex.

Earlier, we saw that an algorithm can be understood as a very specific and complex heuristic. Rather than treating a heuristic as a single monolithic piece, it is often a good idea to break it down into parts, so that searching over and generating heuristics yields semantically meaningful ideas.
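As a minimal sketch, assuming a heuristic is split into just a condition part and an action part, the Python below generates variants by changing one part at a time. The slot names, the menu `ACTIONS`, and `mutate_action` are hypothetical. Mutating the rule as one opaque string (for example, flipping random characters) would mostly produce gibberish, whereas slot-level changes always produce a readable rule.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class Heuristic:
    """A heuristic broken into named parts rather than one opaque rule."""
    name: str
    condition: str  # when the heuristic is relevant
    action: str     # what it proposes doing


# Hypothetical menus of alternative parts; each entry is meaningful on its own.
CONDITIONS = ["concept has a mixed record", "concept is a binary function"]
ACTIONS = ["specialise the concept", "generalise the concept"]


def mutate_action(h: Heuristic, new_action: str) -> Heuristic:
    """Change a single slot, so every variant is still a sensible rule."""
    return replace(h, name=f"{h.name}'", action=new_action)


original = Heuristic("H1", CONDITIONS[0], ACTIONS[0])
variants = [mutate_action(original, a) for a in ACTIONS if a != original.action]
print(variants)
```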

Hypothesis 10: Some very general heuristics can guide search for domain-dependent heuristics.

Domains that appear distinct are often analogous in many ways, and the same heuristics can even be powerful in both.

Hypothesis 11: Like all fields, Heuretics is accumulating a corpus of heuristics.

In this case, the heuristics are *about* Heuretics itself: how to guide research, and how to extract heuristics from experts.

Hypothesis 12: Domains which are unexplored, internally formalisable, and combinatorially immense are ideal for studying Heuretics.

Such domains make it possible to test the limits of search, since they are rich in structure.